Mitigating AdWords Click Fraud

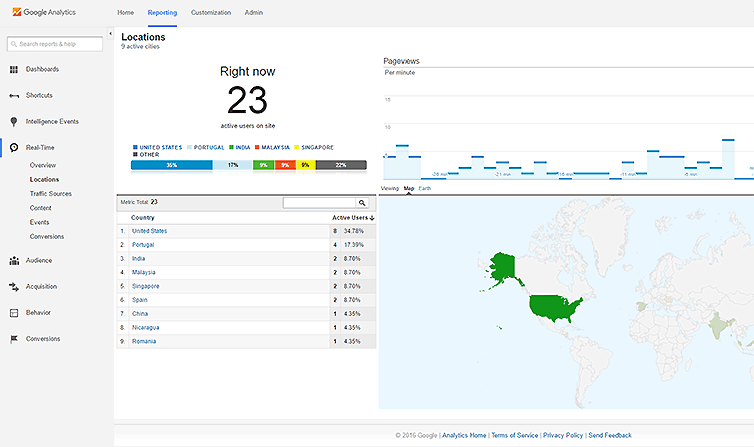

Every now and then there is an hours-long campaign of fraudulent AdWords-clicking from countries all over the world, ranging from Iran to Singapore, dedicated to clicking my cost-per-click Google ads in a vain attempt to exhaust a given daily budget early. My hat goes off to the chap for organizing the attack, or at least knowing a botnet operator and getting operation time.

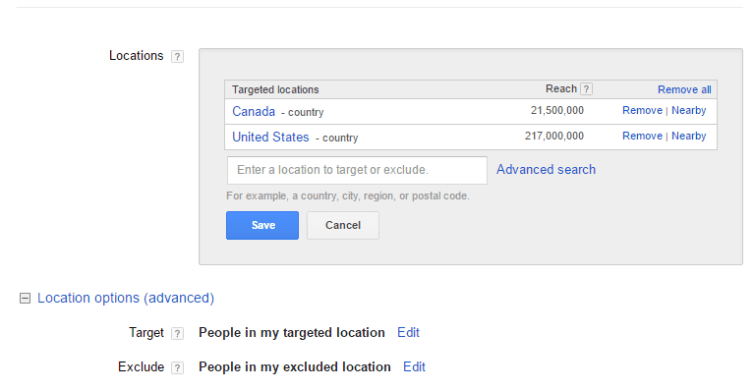

Fortunately, Google knows right off the bat that no country other than the US and Canada is supposed to be served my ads. Their internal filters recognize this and won’t charge me for clicks from those excluded regions.

1) Exclude regions in AdWords campaigns

An AdWords specialist on the phone first guided me through setting the countries to be included and excluding all others. This alone was a valuable tip for a first-time click-fraud target.

2) Prevent bots from clicking goal URIs

I found that these bots mess up my valuable statistics data, like the time of day most visitors come, when (genuine) goals are reached, how long people actually stay on the site, etc. A lot of this can be filtered, especially because I have traps in my Google Tag Manager tags to help me identify real visitors. For example, I have a scrolling tag that fires when the page is scrolled. Bots don’t scroll. To make filtering easier, I take advantage of Cloudflare’s geolocation header (available in PHP as $_SERVER["HTTP_CF_IPCOUNTRY"]) to identify the country of the incoming connection. Then I have this in my .htaccess file:

```apache
# 412 Errors
ErrorDocument 412 /fraudadclick/
# Block bad AdWords clicks
RewriteCond %{QUERY_STRING} ^gclid\= [NC]
RewriteCond %{HTTP:CF-IPCountry} !^$ [NC]
RewriteCond %{HTTP:CF-IPCountry} !(CA|US)$ [NC]
RewriteRule .* - [L,R=412]
```

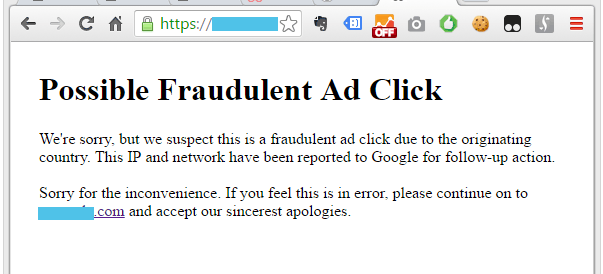

This checks for the telltale Google click, whose query string starts with gclid=, and then checks the country header: if the header is present and it isn’t the US or Canada, the server responds with a 412 error and serves the page located at /fraudadclick/. What’s in that directory, you ask? A nice warning to the attacker.

```html
<!DOCTYPE html>
<html lang="en">
<head>
<meta charset="UTF-8">
<title>Fraudulent Ad Click</title>
</head>
<body style="padding:40px">
<!-- Google Tag Manager -->
<noscript><iframe src="//www.googletagmanager.com/ns.html?id=GTM-OOOOOO" height="0" width="0" style="display:none;visibility:hidden"></iframe></noscript>
<script>(function(w,d,s,l,i){w[l]=w[l]||[];w[l].push({'gtm.start':
new Date().getTime(),event:'gtm.js'});var f=d.getElementsByTagName(s)[0],
j=d.createElement(s),dl=l!='dataLayer'?'&l='+l:'';j.async=true;j.src=
'//www.googletagmanager.com/gtm.js?id='+i+dl;f.parentNode.insertBefore(j,f);
})(window,document,'script','dataLayer','GTM-OOOOOO');</script>
<!-- End Google Tag Manager -->
<h1>Possible Fraudulent Ad Click</h1>
We're sorry, but we suspect this is a fraudulent ad click due to the originating country.
This IP and network have been reported to Google for follow-up action.
<br><br>
Sorry for the inconvenience. If you feel this is in error, please continue on to
<a href="https://yoursite.com">yoursite.com</a> and accept our sincerest apologies.
</body>
</html>
```

The GTM snippet is included so I can still see the pretty graph of real-time visitors and marvel at the effort some malcontent has put into trying to sabotage one of my campaigns. However, I can go a step further.

3) Detect nighttime bots

The bots from the US and Canada are a problem because it’s difficult to distinguish between real and malicious traffic. One trick I use is to trap bots that click on my ads outside of the ad schedule. For example, I have ads turned off from 1 am to 8 am for a given site. This .htaccess code helps:

```apache
# Block out-of-schedule AdWords clicks
RewriteCond %{QUERY_STRING} ^gclid\= [NC]
RewriteCond %{TIME_HOUR} ^(01|02|03|04|05|06|07)
RewriteRule .* - [L,R=412]
```

This server is located in Mountain Time, so the accuracy fluctuates with daylight saving time, but it stays synchronized with the AdWords campaign schedule, which matches the timezone of the server. This filters out night attacks, which Google will happily filter out anyway and which should not cost me anything; again, it is an effort to keep my traffic statistics useful.
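To audit after the fact how many logged clicks fall inside the paused window, the same test can be replayed against access-log entries. This is only a sketch in Python: the function name `is_out_of_schedule` and the constant `PAUSED_HOURS` are made up for illustration, and it assumes Apache's default log timestamp format, which records server-local time with a UTC offset.

```python
from datetime import datetime

# Server-local hours during which the ads are paused: 01:00 through 07:59.
PAUSED_HOURS = range(1, 8)


def is_out_of_schedule(log_timestamp: str, query_string: str) -> bool:
    """Return True for a gclid click that arrived during the paused hours."""
    # Apache's default timestamp looks like "10/Oct/2015:03:15:42 -0600".
    when = datetime.strptime(log_timestamp, "%d/%b/%Y:%H:%M:%S %z")
    # Same condition as ^gclid\= with [NC]: gclid must be the first parameter.
    is_ad_click = query_string.lower().startswith("gclid=")
    # when.hour is the hour as written in the log, i.e. server-local time.
    return is_ad_click and when.hour in PAUSED_HOURS
```

Because the hour is read as written in the log, the check follows the server's clock in the same way `%{TIME_HOUR}` does.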

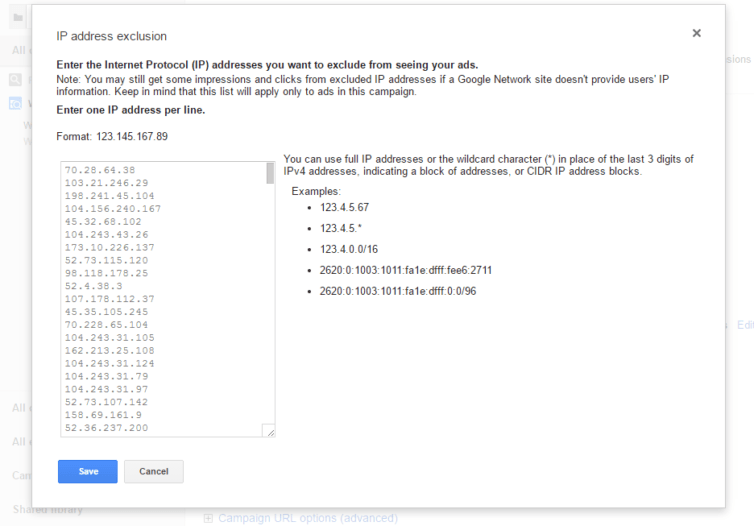

4) Filter out IPs that have clicked on ads previously

This is by far my favorite method, and it can be used across ad campaigns because bots tend to be reused. Once I have successfully filtered out invalid countries and invalid nighttime clicks, I still have to deal with daytime clicks and those IPs. The method I’ve settled on, which has the advantage that previous legitimate visitors won’t be shown my ads again, is to collect all the seemingly-valid-click log entries, grep them for the IPs, and make them into a unique list. What do I do with this list? Why, I give it to Google.

I won’t go into detail here because there is a lot of code involved, but I automated this process. I love building APIs and interacting with other APIs, so using cron jobs and some neat scripts I manage to collect the IPs from the logs, filter them, make them unique, and even sort them with natsort, then send them to my Google campaign using the AdWords API.
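The collection step might look roughly like the following. It is a Python sketch, not the scripts described above: the names `LOG_LINE`, `natural_key`, and `collect_click_ips` are hypothetical, the regex assumes Apache's combined log format, and it assumes the log records the visitor's real IPv4 address (behind Cloudflare that normally requires restoring it from the CF-Connecting-IP header). A click counts as seemingly valid here if its query string starts with gclid= and it was not answered with the 412 status used by the rules earlier. The upload through the AdWords API is left out.

```python
import re

# Combined log format: IP, identity, user, [time], "METHOD path PROTO", status, ...
LOG_LINE = re.compile(
    r'^(?P<ip>\d{1,3}(?:\.\d{1,3}){3}) \S+ \S+ \[[^\]]+\] '
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) '
)


def natural_key(ip: str) -> tuple:
    """Sort key that orders IPv4 addresses numerically, octet by octet."""
    return tuple(int(part) for part in ip.split("."))


def collect_click_ips(lines) -> list:
    """Return the unique, naturally sorted IPs of ad clicks that were not blocked."""
    ips = set()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        query = match.group("path").partition("?")[2]
        was_blocked = match.group("status") == "412"
        if query.lower().startswith("gclid=") and not was_blocked:
            ips.add(match.group("ip"))
    return sorted(ips, key=natural_key)
```

Passing an open log file, for example `collect_click_ips(open("access.log"))` with a path of your own, yields the list that a cron job could then hand to the upload step.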

Gotchas

1) There is a 500-IP limit

There are some gotchas, however. Google is very kind, as they will accept 500 IPs for exclusion (unlike Bing, which only accepts 100). After about two weeks of my scripts running faithfully, I found them producing lists closer and closer to the 500-IP limit. I had to modify my scripts to sort the IPs by frequency and truncate the list to the top 500. It’s not ideal, but it’s far better than sitting on my hands.
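Sorting by frequency and truncating can be sketched in a few lines of Python. The names `MAX_EXCLUSIONS` and `top_offenders` are hypothetical, and the input is assumed to be one IP per logged click, duplicates included.

```python
from collections import Counter

MAX_EXCLUSIONS = 500  # the per-campaign exclusion limit mentioned above


def top_offenders(click_ips, limit=MAX_EXCLUSIONS) -> list:
    """Return at most `limit` IPs, most frequent clickers first."""
    counts = Counter(click_ips)
    # most_common() sorts by count, highest first; ties keep first-seen order.
    return [ip for ip, _ in counts.most_common(limit)]
```

The trade-off is that rarely seen IPs fall off the end of the list, so an address that clicked only once may get through again.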

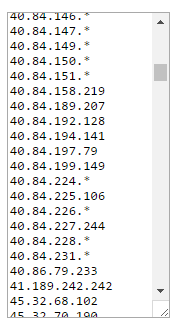

I thought I could do better, so my next attempt to modify my script resulted in a beautiful list of IPs and wildcard addresses like below.

I really enjoyed making the script that produced the above results. It took into account frequency and CIDR address blocks when calculating the wildcard IPs. Now I can get past the 500-IP limit. I find I’m not getting charged much and my daily budget doesn’t get exceeded artificially, and real visitors are still clicking through to my goal URIs. The attacks still come in, but I’m thankful to the fellow for giving me a security challenge that was enjoyable to work through, plus the satisfaction of winning.
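For readers who want a starting point, here is a much simplified Python sketch of the wildcard idea. It is not the script described above: it only considers /24 blocks, the function name `collapse_to_wildcards` and the `min_hosts` threshold are invented for illustration, and it assumes the exclusion list accepts a wildcard in the last octet (such as 203.0.113.*).

```python
from collections import Counter, defaultdict


def collapse_to_wildcards(ip_counts, min_hosts=3, limit=500) -> list:
    """Collapse busy /24 blocks into wildcard entries, then keep the top `limit`.

    ip_counts maps an IPv4 address to the number of clicks seen from it.
    """
    # Group addresses by their first three octets (their /24 block).
    by_block = defaultdict(dict)
    for ip, count in ip_counts.items():
        prefix = ip.rsplit(".", 1)[0]
        by_block[prefix][ip] = count

    entries = Counter()
    for prefix, hosts in by_block.items():
        if len(hosts) >= min_hosts:
            # Enough distinct hosts in one block: one wildcard replaces them all.
            entries[prefix + ".*"] = sum(hosts.values())
        else:
            # Too few hosts to justify a wildcard: keep the individual addresses.
            entries.update(hosts)
    return [entry for entry, _ in entries.most_common(limit)]
```

A wildcard also excludes any legitimate visitors in the same block, so the threshold is worth tuning conservatively.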